Every enterprise L&D leader has lived through this story. Somewhere between 2019 and 2024, their organization launched a major skills initiative. A consulting firm was engaged. A task force was assembled. Cross-functional workshops were held. Taxonomies were built. Platforms were purchased. The CEO used the words “skills-first” in a town hall.

And today, most of those initiatives are functionally dead.

Not officially killed—that would be too clean. They’re in that liminal space where the SharePoint folder still exists, the platform still has a license and someone in HR can technically pull a report. But nothing in the organization operates differently because of it. Training still runs on job titles. Skill gaps are still discovered reactively. Nobody can answer the CEO’s question about whether L&D is building the capabilities the business actually needs.

This isn’t a fringe pattern. It’s the dominant pattern. And if we’re honest about why, the lessons are more architectural than strategic.

Five Reasons Skills Initiatives Break Down

This cycle plays out across industries such as pharma, financial services, tech and manufacturing, and the failure modes are remarkably consistent. They’re not about picking the wrong skills or the wrong platform. They’re structural.

Failure Mode 1: The Taxonomy Trap

Organizations usually take 6 to 12 months to build their competency model and map thousands of skills across hundreds of jobs. The design seems sound: clear hierarchies, defined proficiency levels and agreement among all parties involved. With no end of workshops and working groups, it feels like real progress. Yet while the framework takes shape, the focus stays on structure rather than on how these skills are actually applied in daily work.

A taxonomy is a classification system. It answers the question, “What skills matter?” It doesn’t answer “Who has them?”, “Are they improving?” or “What should we do about it?” The framework describes the terrain. It doesn’t move anyone through it. And by the time the taxonomy is “complete,” the roles it was mapped to have already shifted.

Failure Mode 2: Self-Reported Data as Foundation

The dirty secret of most skills platforms is that the data underneath them is self-reported. Employees rate themselves once a year, often during a performance cycle when they have every incentive to be generous. There’s no multi-source validation. No confidence scoring. No trajectory tracking.

Every decision based on this data, whether it is personalized learning paths, gap analysis, or capability planning, is grounded in assumptions dressed up as intelligence. You can’t personalize learning to actual proficiency if you don’t actually know proficiency. And the organization never discovers this because nobody closes the loop.

Failure Mode 3: The Data Silo Problem

Skills data sits in the HRIS, learning data in the LMS and performance data in yet another platform, all fragmented across disconnected systems. Without a connected structure, integration efforts stretch on, consuming time while delivering only limited clarity.

As a result, every decision that depends on connecting skills to outcomes requires someone to manually stitch together data from multiple systems. A simple question like “Which programs actually improved negotiation skills in our sales team?” becomes a multi-week analytics project. Skills remain an HR artifact trapped in a spreadsheet, not an operational reality that drives daily decisions.
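To make the stitching problem concrete, here is a minimal sketch of what answering the negotiation question looks like once the three data sets share an employee ID. Everything in it is an assumption for illustration: the record shapes, the program names, the 1 to 5 proficiency scale and the `program_impact` helper are hypothetical, not the export format of any real HRIS or LMS.

```python
from collections import defaultdict

# Hypothetical extracts from three separate systems, all keyed by employee ID.
hris_team = {"e1": "sales", "e2": "sales", "e3": "engineering"}
lms_completions = [
    ("e1", "Negotiation Basics"),
    ("e2", "Negotiation Basics"),
    ("e2", "Advanced Deals"),
    ("e3", "Negotiation Basics"),
]
# Negotiation proficiency (assumed 1-5 scale) before and after the period.
negotiation_scores = {"e1": (2, 4), "e2": (3, 4), "e3": (2, 2)}


def program_impact(team):
    """Average proficiency change per program for one team.

    This is a descriptive join, not proof that a program caused the change.
    """
    deltas = defaultdict(list)
    for employee, program in lms_completions:
        if hris_team.get(employee) != team or employee not in negotiation_scores:
            continue  # wrong team, or no assessment on record
        before, after = negotiation_scores[employee]
        deltas[program].append(after - before)
    return {program: sum(d) / len(d) for program, d in deltas.items()}


print(program_impact("sales"))
# {'Negotiation Basics': 1.5, 'Advanced Deals': 1.0}
```

The join itself is a few lines. The multi-week effort comes from the fact that, in most organizations, the shared key and the connected extracts do not exist yet.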

Failure Mode 4: No Action Infrastructure

Some organizations get further than most. They validate some skills data. They build dashboards. Leadership gets visibility. And then, nothing changes operationally.

This is the most insidious failure mode because it appears to be successful from the outside. There’s a dashboard. It’s updated quarterly. People reference it in meetings, but the training team still responds to ad hoc requests. Learning paths are still generic. Nobody gets a proactive alert that says, “Your Q3 product launch requires 40 ML engineers and you currently have 28 qualified.” The gap between visibility and action is where most skills initiatives go to die quietly.
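The kind of proactive alert described above can be sketched in a few lines. This is an illustrative sketch only: the `CapabilityNeed` record and the `shortfall_alert` function are hypothetical names, and the numbers are the ones from the example in the text.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CapabilityNeed:
    """A capability requirement taken from a business plan (hypothetical shape)."""
    initiative: str
    role: str
    required: int


def shortfall_alert(need: CapabilityNeed, qualified: int) -> Optional[str]:
    """Return an alert message when qualified headcount is below the need."""
    gap = need.required - qualified
    if gap <= 0:
        return None  # capability is covered, nothing to send
    return (
        f"Your {need.initiative} requires {need.required} {need.role} "
        f"and you currently have {qualified} qualified (shortfall: {gap})."
    )


launch = CapabilityNeed("Q3 product launch", "ML engineers", 40)
print(shortfall_alert(launch, qualified=28))
# Your Q3 product launch requires 40 ML engineers and you currently have 28 qualified (shortfall: 12).
```

The logic is trivial. What is usually missing is the plumbing around it: a validated count of who is qualified, a machine-readable business plan and a channel that delivers the message to someone who can act on it.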

Failure Mode 5: AI Without Data Architecture

The most recent and costly failure pattern is layering AI tools onto fragmented systems. The pitch sounds compelling: AI will solve data issues, drive personalization at scale and predict skill gaps. Without strong foundations, though, this promise rarely translates into real impact.

AI amplifies what you feed it. If the underlying data is self-reported, siloed and disconnected from outcomes, AI doesn’t fix that; it scales it. You get faster noise, not smarter decisions. Personalization without verified context is just segmentation by job title with a more sophisticated label. Prediction without historical patterns connected to outcomes is speculation with a confidence interval.

What Execution Actually Requires: From Insight to Action

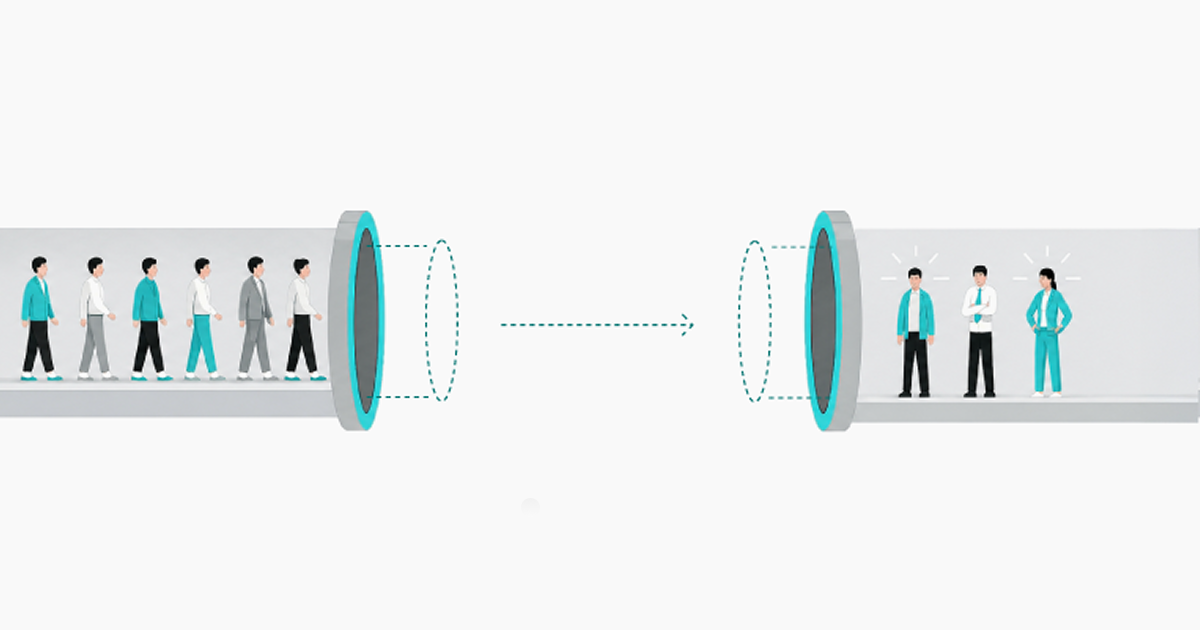

All five failure modes trace back to the same fundamental error: organizations invested in the framework layer without investing in the execution layer.

They answered “What skills matter?” without building the infrastructure to answer “What’s true?”, “What’s connected?” and “What should we do about it?”

Execution requires three capabilities that most skills initiatives never address:

- Validation: Skills must be verified through multiple sources (assessments, manager input, project application and performance data), not just self-assessment. Each claim needs a confidence score. Proficiency needs to be tracked as a trajectory, not captured as a snapshot.

- Visibility: Skills data must flow in real time across HRIS, LMS and performance systems. Not quarterly reports assembled by hand. Not dashboards that lag by 90 days. Live operational visibility into organizational capability.

- Action: Skills insights must trigger operational decisions—personalized learning paths based on validated gaps, proactive alerts when capability shortfalls threaten business plans, predictive interventions that get ahead of problems rather than reacting to them.

Without all three in place, the framework becomes static. Well-organized on paper but disconnected from the daily details that build real capability.
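As a rough illustration of the validation capability, the sketch below blends evidence from several sources into one proficiency estimate, attaches a confidence score and tracks the trend over time. The source weights, the 1 to 5 scale and the function names are assumptions made for this example, not an established scoring standard.

```python
from dataclasses import dataclass

# Hypothetical weights: how much each evidence source is trusted.
SOURCE_WEIGHTS = {"self": 0.5, "manager": 1.0, "project": 1.5, "assessment": 2.0}


@dataclass
class Evidence:
    source: str   # one of the keys in SOURCE_WEIGHTS
    level: float  # observed proficiency on an assumed 1-5 scale


def validated_proficiency(evidence):
    """Return (weighted proficiency, confidence between 0 and 1)."""
    if not evidence:
        raise ValueError("at least one piece of evidence is required")
    total = sum(SOURCE_WEIGHTS[e.source] for e in evidence)
    level = sum(SOURCE_WEIGHTS[e.source] * e.level for e in evidence) / total
    # Confidence grows with the trust-weighted share of distinct sources covered.
    covered = sum(SOURCE_WEIGHTS[s] for s in {e.source for e in evidence})
    return level, covered / sum(SOURCE_WEIGHTS.values())


def trajectory(snapshots):
    """Average change per period between the first and last snapshot."""
    if len(snapshots) < 2:
        raise ValueError("a trajectory needs at least two snapshots")
    return (snapshots[-1] - snapshots[0]) / (len(snapshots) - 1)


level, confidence = validated_proficiency(
    [Evidence("self", 5), Evidence("assessment", 3), Evidence("manager", 3)]
)
print(round(level, 2), round(confidence, 2))  # 3.29 0.7
print(trajectory([2.0, 2.5, 3.5]))            # 0.75
```

In this example a generous self-rating of 5 is pulled down to roughly 3.3 by the assessment and the manager's input, and the missing project evidence shows up as reduced confidence rather than being silently ignored.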

What High-Performing Organizations Do Differently

The organizations where skills-based transformation actually took hold—and they do exist—didn’t start with a better taxonomy. They started with better infrastructure. The shift is conceptual: from skills as a static framework to skills as a living intelligence layer.

In these organizations, something fundamentally different is happening:

- Training teams can personalize to validated proficiency, not job titles. Two people with the same role but different skill levels get different learning paths because the system knows the difference.

- Training teams can prove impact with data, not hope. They can correlate skill development to performance outcomes with confidence scores, not completion rates dressed up as ROI.

- Training teams can be proactive rather than reactive. They can predict capability gaps months before they hurt the business, because the intelligence layer connects skills data to business plans in real time.

What matters most is how the intelligence grows. After 12–24 months, the system delivers deeper insights, stronger organizational visibility and faster, more reliable predictions, driven by real performance data. That’s the difference between running a skills program and building a lasting edge.

Skills initiatives fail through five consistent patterns: over-investing in taxonomy without execution infrastructure, relying on self-reported skills data, leaving skills data trapped in disconnected systems, building dashboards without action mechanisms and bolting AI onto fragmented data. The common root cause is investing in the framework layer (what skills matter) without building the execution layer (how to validate, connect and act on skills operationally).

Conclusion

If your organization has been through a skills initiative that hasn’t changed how L&D operates, the uncomfortable question isn’t “was our framework wrong?” It probably wasn’t. The question is: did you build the execution layer, or just the classification layer?

The organizations that get this right in the next 12–18 months will have a learning operation where skills data drives decisions automatically, intelligence compounds over time and L&D shifts from reactive order-taking to strategic workforce capability building. The ones that don’t will add another initiative to the graveyard.

The framework wasn’t the problem. The infrastructure to make it real was never built. That’s the lesson from the graveyard and it’s not too late to learn it.

At Infopro Learning, we help organizations move from frameworks to real execution, connecting the big picture to daily details so teams build skills faster, decisions improve and capability scales across the business. If your skills strategy isn’t changing how work gets done, it’s time to fix the execution layer.

Frequently Asked Questions (FAQs)

- Why do most skills-based transformation initiatives fail?

  Most skills-based transformation initiatives fail because organizations invest in the framework layer (building taxonomies and creating competency models) but not in the execution layer, which includes validated skills data, real-time cross-system visibility and automated action infrastructure. The framework tells you what matters; execution tells you what is true, what is connected and what is needed.

- What are the most common failure patterns in skills initiatives?

  Five patterns appear consistently: over-investing in taxonomy without operational infrastructure support, relying on self-reported skills data without validation, trapping data in disconnected HRIS, LMS and performance management systems, designing dashboards without mechanisms for action, and adding AI tools to fragmented data foundations. They share a common underlying cause: a lack of execution infrastructure.

- How do successful organizations make skills-based transformation work?

  Effective organizations start with infrastructure, not taxonomy. That means developing a validated model of proficiency based on multiple data sources, integrating skills data in real time across multiple systems and automating action loops that use skills intelligence to inform business decisions, such as personalized learning paths, proactive gap management and predictive workforce interventions. This compounds into a competitive advantage over time.